A while back I wrote a post on how the overhead of logging is so minimal that the performance impact is well worth the benefits of proper logging. I also wrote another post about how deploying your application in tmpfs or on a RAM drive basically buys you nothing. The other day I had a conversation with a person I respect (I respect any PHP developer who knows how to use strace) about the cost of file IO. My assertion is, and has been for a long time, that file IO is not the boogeyman it is claimed to be.

So I decided to test a cross between those two posts. What is the performance cost of writing 1,000,000 log-sized entries onto a physical file system compared to a RAM drive? As an added bonus I also wanted to show the difference between a repeated open/write/close and holding a file handle open while writing the log entries, because I think there is something worth learning there.

The first thing I needed to do was create my RAM drive. My first test run ran out of disk space so I had to reboot the machine with the kernel parameter ramdisk_size=512000. This allowed my RAM drive to be up to 512M (or thereabouts). Then I created my RAM drive.

```shell
# Format the RAM device (no reserved blocks), then mount that same device.
mke2fs -m 0 /dev/ram0
mkdir -p /ramdrive
mount /dev/ram0 /ramdrive
```

The code I used to test was the following PHP code.

```php
<?php
// One log file on the physical disk, one on the RAM drive.
$physicalLog = '/home/kschroeder/file.log';
$ramLog = '/ramdrive/file.log';
$iterations = 1000000;
$message = 'The Quick Brown Fox Jumped Over The Lazy, oh who really cares, anyway?';

// Scenario 1: open/write/close on every entry, physical disk.
$time = microtime(true);
for ($i = 0; $i < $iterations; $i++) {
    file_put_contents($physicalLog, $message, FILE_APPEND);
}
echo sprintf("Physical: %s\n", (microtime(true) - $time));

// Scenario 2: open/write/close on every entry, RAM drive.
$time = microtime(true);
for ($i = 0; $i < $iterations; $i++) {
    file_put_contents($ramLog, $message, FILE_APPEND);
}
echo sprintf("RAM: %s\n", (microtime(true) - $time));

// Start the second pair of tests from empty files.
unlink($physicalLog);
unlink($ramLog);

// Scenario 3: one open file handle for all entries, physical disk.
$time = microtime(true);
$fh = fopen($physicalLog, 'w');
for ($i = 0; $i < $iterations; $i++) {
    fwrite($fh, $message);
}
fclose($fh);
echo sprintf("Physical (open fh): %s\n", (microtime(true) - $time));

// Scenario 4: one open file handle for all entries, RAM drive.
$time = microtime(true);
$fh = fopen($ramLog, 'w');
for ($i = 0; $i < $iterations; $i++) {
    fwrite($fh, $message);
}
fclose($fh);
echo sprintf("RAM (open fh): %s\n", (microtime(true) - $time));
```

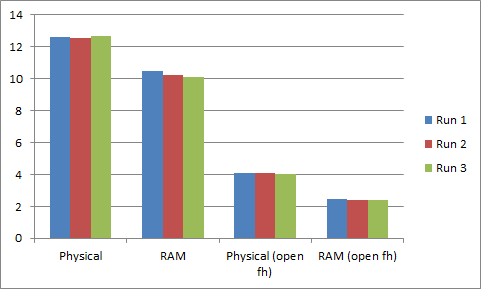

This code tests each of four scenarios: file_put_contents to physical, file_put_contents to RAM, fwrite to physical and fwrite to RAM. The test was run three times. The test is a measure of the performance of a really crappy file system compared to RAM.

The Y axis is the number of seconds it took to write 1,000,000 log entries. As we can see from the chart, the RAM drive provided some benefit with file_put_contents: it was about 12% faster. But the interesting part is the last two. The physical write to the file system with an open file handle is about 2.5 times faster than writing to the RAM drive with file_put_contents. With an open file handle the RAM drive, again, outperformed the physical disks, this time by 42%.

But if you compare the two sets of tests you will notice something interesting. While the relative difference in the latter test is 42%, the wall-clock time is almost the same: 2.1 seconds for file_put_contents, 1.6 for fwrite. So it would seem that the physical overhead of using the disks, per 1,000,000 log file writes, is 0.00000055 seconds.

Now, I KNOW my disks are not that fast, nor is the VM that I ran the tests on. It is probably due to write caching. But that is largely irrelevant. My assertion is that the file system (not necessarily just the disk) is not your enemy.

This is an important distinction. I ran two dd commands, one to the disk with fdatasync set and one to the RAM drive with fdatasync set. The results are not surprising. 55MB per second for the disk, 290MB per second to the RAM drive. But that is not the point. The operating system, Linux in this case, does a LOT of things to make working with the physical layer as efficient as possible. Therefore, things like logging or doing other operations on the file system are not necessarily a bad thing because the actual overhead involved is minimal compared with your application logic.
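The exact dd invocations are not shown above, so here is a rough sketch of how such a comparison could be run, assuming GNU dd on Linux. The function name `sync_write_test`, the 64 MiB size, and the `/tmp` default for the physical disk are placeholders for illustration, not the values behind the 55MB and 290MB per second figures.

```shell
#!/bin/sh
# Sketch only: paths and sizes are assumptions, adjust them to your own system.
DISK_DIR="${DISK_DIR:-/tmp}"       # a directory on the physical file system (assumed)
RAM_DIR="${RAM_DIR:-/ramdrive}"    # the RAM drive mount point used earlier in the post
COUNT_MB="${COUNT_MB:-64}"         # MiB of zeros to write per run (assumed)

# Write zeros into a scratch file in the given directory and print dd's summary line.
sync_write_test() {
    swt_dir="$1"
    swt_count="$2"
    if [ ! -d "$swt_dir" ]; then
        echo "skipping $swt_dir (not a directory)"
        return 0
    fi
    # conv=fdatasync makes dd flush the file data to the device before it
    # reports throughput, so the page cache does not hide the real write cost.
    dd if=/dev/zero of="$swt_dir/dd-test.img" bs=1M count="$swt_count" conv=fdatasync 2>&1 | tail -n 1
    rm -f "$swt_dir/dd-test.img"
}

for target in "$DISK_DIR" "$RAM_DIR"; do
    echo "== $target =="
    sync_write_test "$target" "$COUNT_MB"
done
```

Running the same command without `conv=fdatasync` should show how much of the gap the write cache normally absorbs, which is the distinction being made here.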

Please feel free to do similar tests and post results. I would love to see data that contradicts me. That would make this a much more interesting topic of conversation. 🙂

Comments

gokulmig

Nice summary and test. It is indeed a great performance problem when using the file system, especially when you write a bunch of small files from PHP. It consumes too many resources and sometimes gets the file system stuck.

I suppose when you tested the basic file system you used Ext4. Have you tried other types like GlusterFS?

kschroeder

gokulmig I did a similar test on Gluster a while back http://www.eschrade.com/page/testing-glusterfs-for-magento/

Stelian Mocanita

In this particular case, writing to a RAM drive cannot possibly be expected to bring any huge performance boost, due to the small amount of data you are writing. If you were, however, playing around with significantly larger files (e.g. generated PDF invoices), the graph would look significantly different in the second test (the one where you keep the file open).

As far as the first test goes, I would add some sort of disclaimer that, even though it is significantly slower, it should not be done anyway. In a production environment, such a thing could lead to a lot of issues with limits, such as fs.file-max.

With that said, a good read and I fully agree with the fact that physical storage should not be feared, you just need to know what to use, when and why.

mcouillard

Interesting! I just had to try it for myself to ensure Windows (7 64bit) wasn’t doing something terribly inferior to Linux. With fwrite() the disk completed in 2.1 seconds versus a DataRam RAMDisk time of 1.8 seconds. Not bad!